Key Takeaways

- AI-generated images are getting harder to spot

- AI detection tools exist, but are underused

- Artists’ participation is essential in stopping misuse

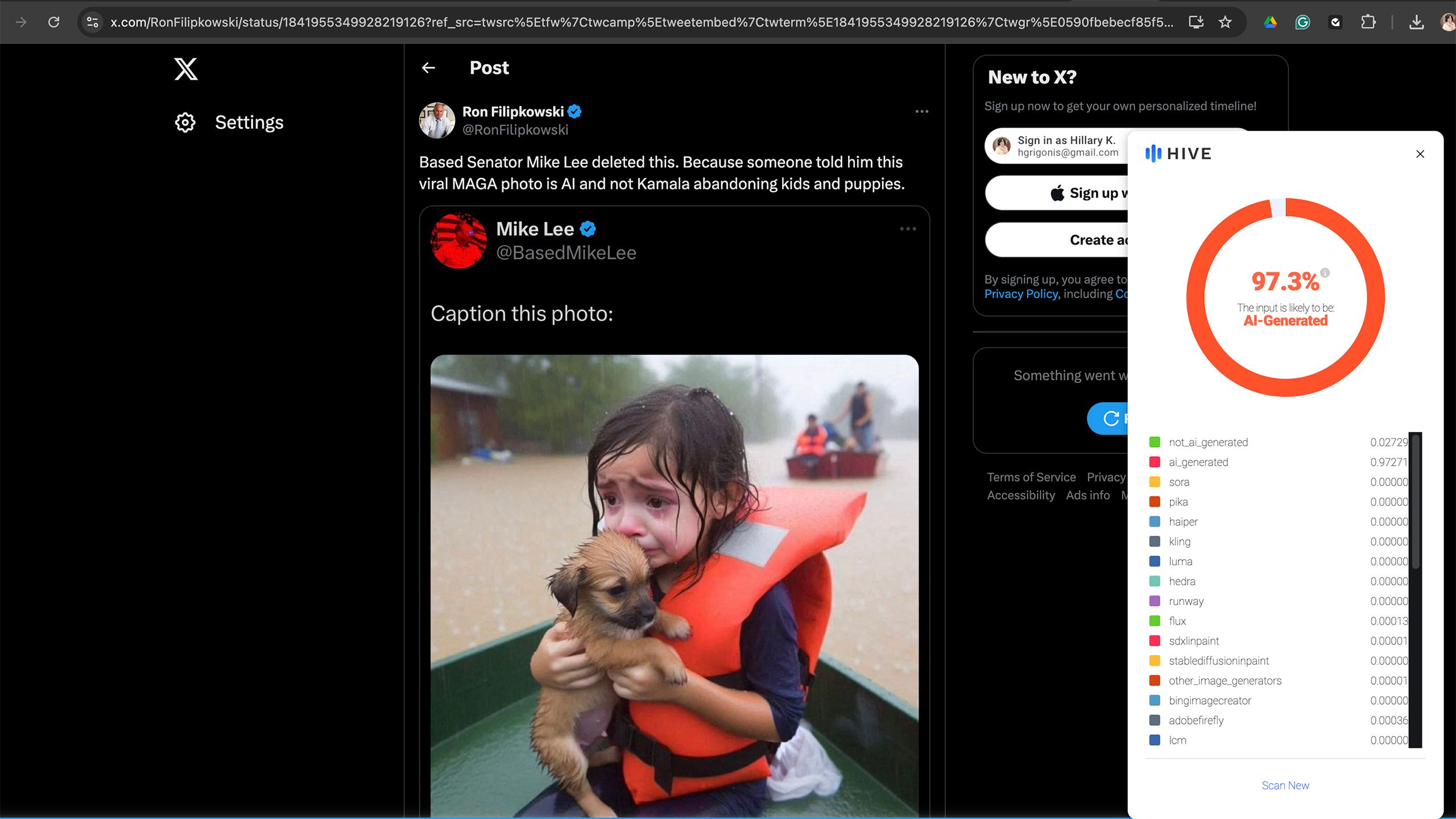

In the wake of the devastation caused by Hurricane Helene, an image depicting a little girl crying while clinging to a puppy on a boat in a flooded street went viral as a depiction of the storm’s devastation. The problem? The girl (and her puppy) don’t actually exist. The image is one of many AI-generated depictions flooding social media in the aftermath of the storms. The image brings up a key issue in the age of AI: generative AI is growing faster than the technology used to flag and label such images.

Several politicians shared the image of the non-existent girl and her puppy on social media in criticism of the current administration, and yet that misappropriated use of AI is one of the more innocuous examples. After all, as the deadliest hurricane in the U.S. since 2017, Helene’s destruction has been photographed by many actual photojournalists, from striking images of families fleeing in floodwaters to a tipped American flag underwater.

However, AI images meant to create misinformation are quickly becoming an issue. A study published earlier this year by Google, Duke University, and several fact-checking organizations found that AI didn’t account for many fake news images until 2023 but now makes up a “sizable fraction of all misinformation-associated images.” From the pope wearing a puffer jacket to an imaginary girl fleeing a hurricane, AI is an increasingly easy way to create false images and video that help perpetuate misinformation.

Using technology to fight technology is key to recognizing and ultimately preventing artificial imagery from attaining viral status. The issue is that the technological safeguards are growing at a much slower pace than AI itself. Facebook, for example, labels AI content built using Meta AI as well as content it detects as generated on outside platforms. However, the tiny label is far from foolproof and doesn’t work on all types of AI-generated content. The Content Authenticity Initiative, an organization that includes many industry leaders, Adobe among them, is developing promising tech that would leave the creator’s information intact even in a screenshot. However, the Initiative was organized in 2019, and many of the tools are still in beta and require the creator to participate.



AI-generated images are becoming harder to recognize as such

The better generative AI becomes, the harder it is to spot a fake

I first saw the hurricane girl in my Facebook news feed, and while Meta is putting more effort into labeling AI than X, which allows users to generate images of recognizable political figures, the image didn’t come with a warning label. X later flagged the photo as AI in a community note. Still, I knew right away that the image was likely AI-generated: real people have pores, while AI images still tend to struggle with things like texture.

AI technology is quickly recognizing and compensating for its own shortcomings, however. When I tried X’s Grok 2, I was startled not just by its ability to generate recognizable people, but by the fact that, in many cases, these “people” were so detailed that some even had pores and skin texture. As generative AI advances, these artificial graphics will only become harder to recognize.


Many social media users don’t take the time to vet the source before hitting that share button

While AI detection tools are arguably growing at a much slower rate, such tools do exist. For example, the Hive AI detector, a plugin that I’ve installed on Chrome on my laptop, rated the hurricane girl as 97.3 percent likely to be AI-generated. The trouble is that these tools take time and effort to use. A majority of social media browsing is done on smartphones rather than laptops and desktops, and even if I decided to use a mobile browser rather than the Facebook app, Chrome doesn’t allow such plugins on its mobile version.

For AI detection tools to make the most significant impact, they need to be embedded into the tools users already use and to have widespread participation from the apps and platforms used most. If AI detection takes little to no effort, then I believe we could see more widespread use. Facebook is making an attempt with its AI label, though I do think it needs to be much more noticeable and better at detecting all types of AI-generated content.

Widespread participation will likely be the trickiest part to achieve. X, for example, has prided itself on creating the Grok AI with a loose moral code. A platform that is very likely attracting a large share of users to its paid subscription with lax ethical guidelines, such as the ability to generate images of politicians and celebrities, has very little monetary incentive to join forces with those fighting the misuse of AI. Even AI platforms with restrictions in place aren’t foolproof: a study from the Center for Countering Digital Hate succeeded in bypassing these restrictions to create election-related images 41 percent of the time using Midjourney, ChatGPT Plus, Stability.ai DreamStudio and Microsoft Image Creator.

If the AI companies themselves worked to properly label AI, then these safeguards could launch at a much faster rate. This applies not just to image generation, but to text as well, as ChatGPT is working on a watermark as a way to help educators recognize students who took AI shortcuts.


Artist participation is also key

Proper attribution and AI-scraping prevention could help incentivize artists to participate

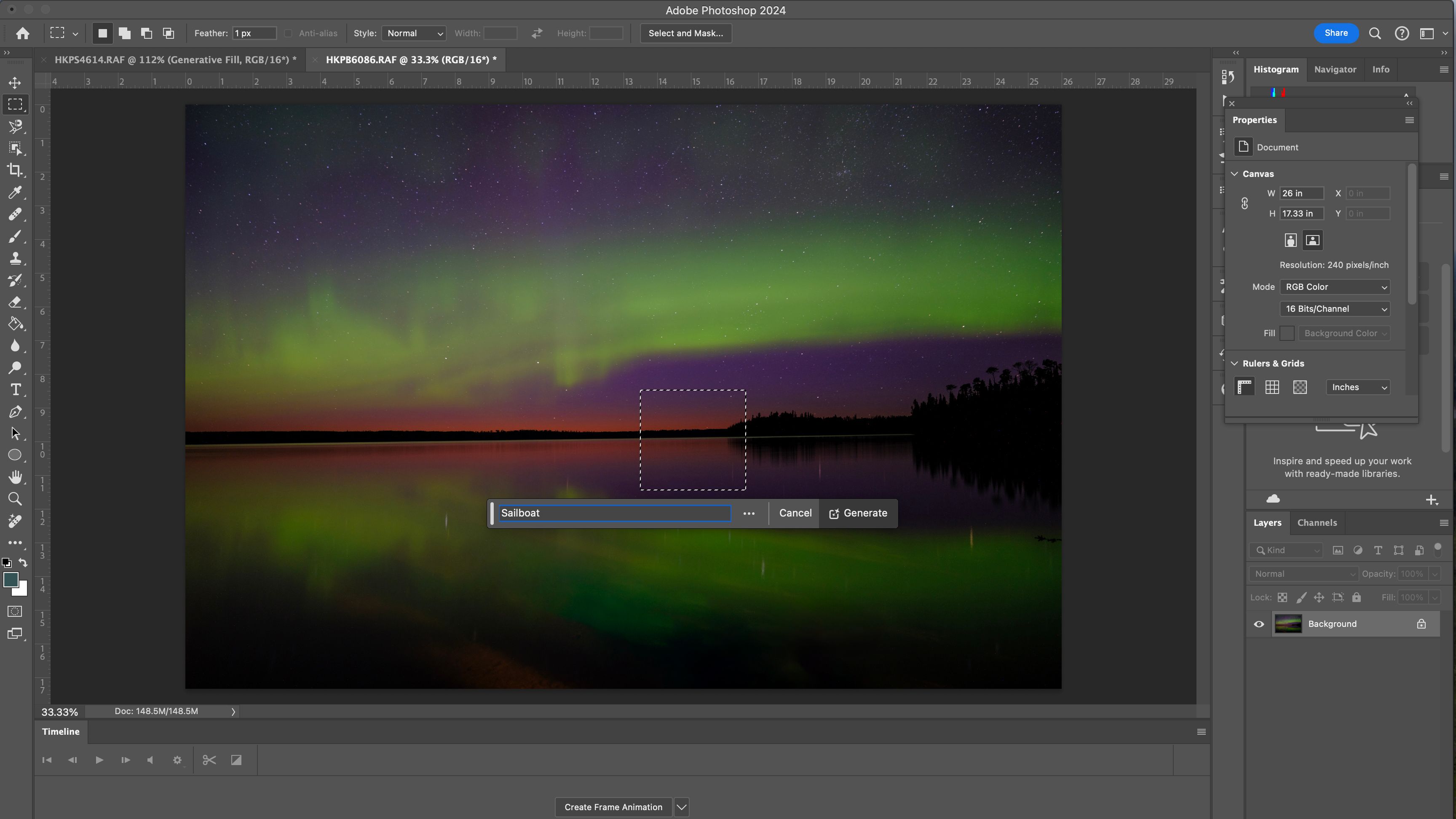

While the adoption of safeguards by AI companies and social media platforms is critical, the other piece of the equation is participation by the artists themselves. The Content Authenticity Initiative is working to create a watermark that not only keeps the artist’s name and proper credit intact, but also details whether AI was used in the creation. Adobe’s Content Credentials is an advanced, invisible watermark that labels who created an image and whether or not AI or Photoshop was used in its creation. The data can then be read by the Content Credentials Chrome extension, with a web app expected to launch next year. These Content Credentials work even when someone takes a screenshot of the image, and Adobe is also working on using this tool to prevent an artist’s work from being used to train AI.

Adobe says that it only uses licensed content from Adobe Stock and the public domain to train Firefly, but is building a tool to block other AI companies from using an image for training.

The trouble is twofold. First, while the Content Authenticity Initiative was organized in 2019, Content Credentials (the name for that digital watermark) is still in beta. As a photographer, I now have the ability to label my work with Content Credentials in Photoshop, yet the tool is still in beta and the web tool to read such data isn’t expected to roll out until 2025. Photoshop has tested a number of generative AI tools and launched them into the fully fledged version since, but Content Credentials seems to be a slower rollout.

Second, content credentials won’t work if the artist doesn’t participate. Currently, content credentials are optional and artists can choose whether or not to add this data. The tool’s ability to help prevent an image from being scraped to train AI and its ability to keep the artist’s name attached to the image are good incentives, but the tool doesn’t yet seem to be widely used. If the artist doesn’t use content credentials, then the detection tool will simply show “no content credentials found.” That doesn’t mean that the image in question is AI; it simply means that the artist didn’t choose to participate in the labeling feature. For example, I get the same “no credentials” message when viewing the Hurricane Helene photos taken by Associated Press photographers as I do when viewing the viral AI-generated hurricane girl and her equally generated puppy.

While I do believe that the rollout of content credentials is moving at a snail’s pace compared to the rapid deployment of AI, I still believe that it could be key to a future where generated images are properly labeled and easily recognized.

The safeguards to prevent the misuse of generative AI are starting to trickle out and show promise. But these systems will need to be developed at a much faster pace, adopted by a wider range of artists and technology companies, and built in a way that makes them easy for anyone to use in order to make the biggest impact in the AI era.
